0. Cryptography as Civilizational Physics

Cryptography defines who can do what to whom, when, and under which assumptions. Two incompatible uses:

- Crypto protects states/platforms/AI-governed infrastructures from people.
  - Keys are escrowable, revocable, centrally issued.
  - Ledgers/identities become editable by decree.
- Crypto protects people and voluntary networks from institutions and adversaries.
  - Keys are non-escrowable property.
  - History becomes expensive to rewrite; coordination does not require central trust.

What world does this protocol instantiate by default?

Names (machinery suppliers): Diffie & Hellman, Merkle, Rivest–Shamir–Adleman, Goldwasser–Micali, Yao, Boneh, Schneier, Anderson.

1. Threat Modeling First: Adversaries, Not Algorithms

Primitives are meaningless without a threat model.

1.1 Assets

- Confidentiality: plaintext, keys, internal state, and metadata (who/when/how often/where/volume).

- Integrity: ledgers, logs, contracts, system state, models and parameters.

- Availability: ability to transact, communicate, and compute when desired.

- Identity power: the capacity to act as an identity (sign, spend, vote).

1.2 Adversaries

- Commodity attackers and malware reuse.

- Organized crime / corporate espionage.

- Nation-states: passive global capture, active manipulation, legal coercion (“lawful intercept”).

- AI-augmented control stacks that optimize for behavior shaping (metadata + inference).

- Insiders: admins, validators, HSM operators, devs.

1.3 Capabilities

| Mode | Examples | Failure signature |
|---|---|---|
| Passive | Wiretaps, traffic capture, long-term storage | Harvest-now / decrypt-later; metadata graph |
| Active | MITM, injection, downgrade, replay, censorship | “Secure session” that is secretly proxied |
| Endpoint | Malware, firmware trojans, baseband, compromised RNG | Crypto “works” while keys leak upstream |
| Legal / economic | Regulation, subpoenas, standards capture | Trust roots become policy levers |
| Coercive | Physical pressure, extortion | Keys become hostage assets |

1.4 Layers of failure

- Math: hardness assumptions fail (factoring, discrete log, lattices).

- Protocol: secure parts assembled into insecure flows.

- Implementation: bugs, timing/cache/power/EM side channels.

- Ops / UX: key loss, phishing, misconfig, insecure defaults.

- Ontological: protocol design encodes centralization and control.

A security proof for a primitive rarely survives naive composition. Real systems must be analyzed as compositions; composable security frameworks (e.g., Canetti's Universal Composability) exist precisely because naive composition fails.

2. Symmetric Cryptography: Shared Secrets, Real Work

2.1 Model

- $C = E_K(M)$, $M = D_K(C)$

- Bulk data lives here: AES (block), ChaCha20 (stream).

2.2 AEAD: confidentiality and integrity

- Non-negotiable in hostile environments: never deploy raw encryption without authentication.

- Examples: AES-GCM, ChaCha20-Poly1305.
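To make the AEAD contract concrete without third-party dependencies, here is a minimal encrypt-then-MAC sketch using only Python's standard library. It is a teaching toy, not AES-GCM or ChaCha20-Poly1305: the assumed construction uses HMAC-SHA256 in counter mode as a keystream, a second independent key for an HMAC-SHA256 tag over (associated data, nonce, ciphertext), and hypothetical function names `aead_seal`/`aead_open`. The property to notice is that decryption verifies the tag before releasing any plaintext.

```python
import hashlib
import hmac
import secrets

NONCE_LEN = 12
TAG_LEN = 32


def _keystream(k_enc: bytes, nonce: bytes, length: int) -> bytes:
    """HMAC-SHA256(k_enc, nonce || counter) blocks: a PRF used as a stream cipher (toy)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hmac.new(k_enc, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()
        counter += 1
    return bytes(out[:length])


def _tag(k_mac: bytes, ad: bytes, nonce: bytes, ct: bytes) -> bytes:
    # Length-prefix the associated data so the (ad, ciphertext) boundary is unambiguous.
    msg = len(ad).to_bytes(8, "big") + ad + nonce + ct
    return hmac.new(k_mac, msg, hashlib.sha256).digest()


def aead_seal(k_enc: bytes, k_mac: bytes, plaintext: bytes, ad: bytes = b"") -> bytes:
    """Toy AEAD encryption: returns nonce || ciphertext || tag."""
    nonce = secrets.token_bytes(NONCE_LEN)  # fresh random nonce for every message
    ks = _keystream(k_enc, nonce, len(plaintext))
    ct = bytes(p ^ k for p, k in zip(plaintext, ks))
    return nonce + ct + _tag(k_mac, ad, nonce, ct)


def aead_open(k_enc: bytes, k_mac: bytes, blob: bytes, ad: bytes = b"") -> bytes:
    """Toy AEAD decryption: rejects before decrypting if the tag does not verify."""
    if len(blob) < NONCE_LEN + TAG_LEN:
        raise ValueError("ciphertext too short")
    nonce, ct, tag = blob[:NONCE_LEN], blob[NONCE_LEN:-TAG_LEN], blob[-TAG_LEN:]
    if not hmac.compare_digest(tag, _tag(k_mac, ad, nonce, ct)):
        raise ValueError("authentication failed")
    ks = _keystream(k_enc, nonce, len(ct))
    return bytes(c ^ k for c, k in zip(ct, ks))
```

Flipping any ciphertext bit or changing the associated data makes `aead_open` raise instead of returning attacker-influenced plaintext. In real systems, use a vetted library AEAD.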

2.3 Randomness, nonces, KDFs

- Key entropy: 128-bit baseline; 256-bit for long horizon / PQ margin.

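- Nonce reuse is catastrophic, especially in AES-GCM: two messages under the same key and nonce leak the XOR of their plaintexts and expose the authentication subkey, enabling tag forgeries.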

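- Passwords need slow, salted, memory-hard KDFs (scrypt/Argon2); otherwise the effective key space collapses to the password space and offline guessing becomes cheap.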
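A minimal password-to-key sketch using Python's `hashlib.scrypt` (available when Python is built against OpenSSL 1.1 or later) with a random 16-byte salt from `secrets`. The cost parameters `n=2**14, r=8, p=1` are an illustrative assumption, not a recommendation; tune them to your latency and memory budget, and prefer Argon2id where a vetted binding is available. Helper names are hypothetical.

```python
import hashlib
import hmac
import secrets


def derive_key(password: str, salt: bytes, n: int = 2**14, r: int = 8, p: int = 1) -> bytes:
    """Stretch a low-entropy password into a 256-bit key with scrypt (memory-hard)."""
    return hashlib.scrypt(password.encode("utf-8"), salt=salt, n=n, r=r, p=p,
                          maxmem=64 * 1024 * 1024, dklen=32)


def new_record(password: str) -> tuple:
    """Return (salt, derived key); a random per-user salt defeats precomputed tables."""
    salt = secrets.token_bytes(16)
    return salt, derive_key(password, salt)


def check(password: str, salt: bytes, stored: bytes) -> bool:
    # Re-derive and compare in constant time.
    return hmac.compare_digest(derive_key(password, salt), stored)
```

Every guess an attacker makes now costs a full scrypt evaluation (about 16 MiB of memory at these settings), which is the point.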

2.4 Side channels

- Timing, cache, power, EM, branch patterns leak.

- Constant-time implementations for key-dependent operations are mandatory.
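A classic key-dependent leak is an early-exit comparison of a secret such as a MAC tag. The sketch counts how many bytes a naive comparison inspects (a deterministic stand-in for timing, which is noisy to measure): the work done reveals the length of the matching prefix, so an attacker can recover a tag byte by byte. Python's `hmac.compare_digest` is documented to avoid content-based short-circuiting. Function names are hypothetical.

```python
import hmac


def naive_equal(a: bytes, b: bytes) -> tuple:
    """Early-exit comparison; also returns how many bytes were examined."""
    if len(a) != len(b):
        return False, 0
    for i, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return False, i + 1  # the work done leaks the matching-prefix length
    return True, len(a)


def safe_equal(a: bytes, b: bytes) -> bool:
    """Comparison whose running time does not depend on where the inputs differ."""
    return hmac.compare_digest(a, b)


secret_tag = bytes.fromhex("a1b2c3d4")
for guess in (b"\x00\x00\x00\x00", b"\xa1\x00\x00\x00", b"\xa1\xb2\x00\x00"):
    ok, work = naive_equal(secret_tag, guess)
    print(guess.hex(), ok, work)
# prints:
# 00000000 False 1
# a1000000 False 2
# a1b20000 False 3
```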

3. Asymmetric Cryptography: Identity & Authorization at Distance

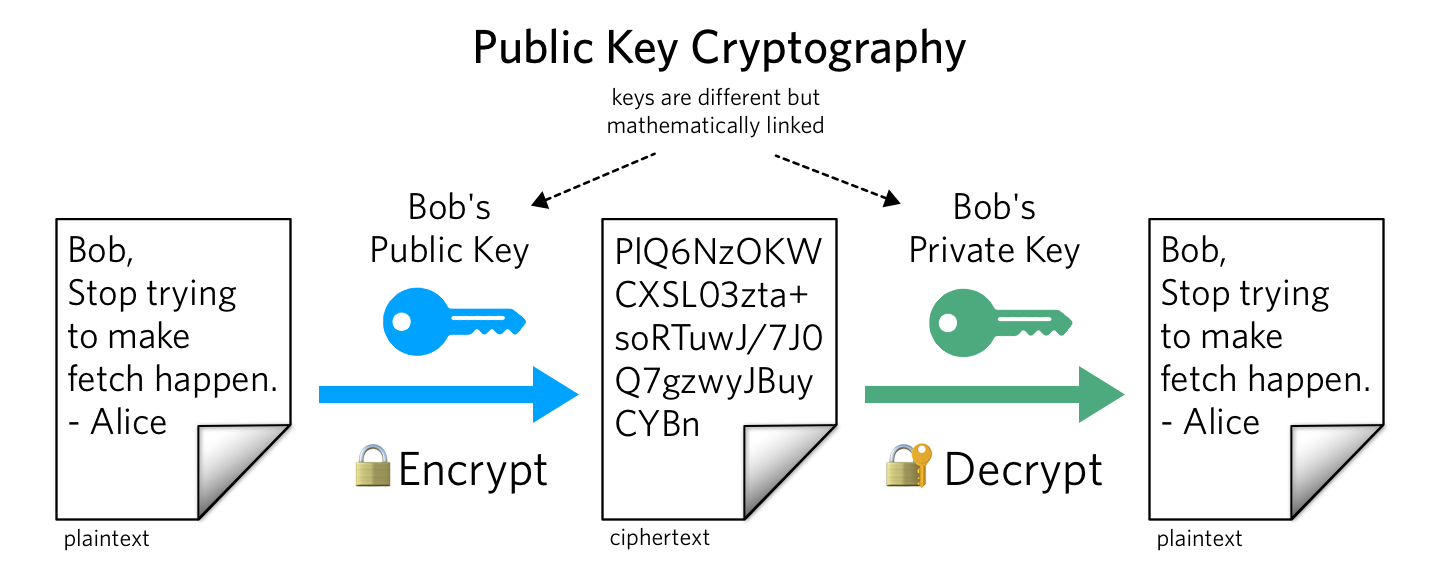

3.1 Public / private key pairs

- Public key (PK) distributes; private key (SK) is guarded.

- Two use-patterns: public-key encryption; digital signatures.

- Backed by trapdoor one-way functions.

3.2 RSA and ECC

- RSA: security rests on the RSA problem, which is no harder than factoring n = p·q; safe only with correct padding (OAEP for encryption, PSS for signatures).

- ECC: elliptic-curve discrete log; smaller keys for equivalent security.

- Signatures: ECDSA, EdDSA, Schnorr. Key exchange: X25519/X448.
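The standard classroom example (p = 61, q = 53, e = 17) makes the trapdoor concrete and shows why padding matters: unpadded RSA is deterministic and malleable, so anyone holding only the public key can turn an encryption of m into an encryption of 2m. The sizes are deliberately tiny; real deployments use moduli of 2048 bits or more with OAEP or PSS. Requires Python 3.8+ for `pow(e, -1, phi)`.

```python
# Textbook RSA with toy parameters: illustration only, never deploy unpadded RSA.
p, q = 61, 53
n = p * q                 # public modulus: 3233
phi = (p - 1) * (q - 1)   # 3120, computable only by whoever knows p and q
e = 17                    # public exponent, coprime to phi
d = pow(e, -1, phi)       # private exponent: 2753


def encrypt(m: int) -> int:
    return pow(m, e, n)


def decrypt(c: int) -> int:
    return pow(c, d, n)


c = encrypt(65)
assert c == 2790 and decrypt(c) == 65   # deterministic: equal plaintexts give equal ciphertexts

# Malleability: multiplying the ciphertext by 2^e doubles the hidden plaintext.
c_forged = (c * pow(2, e, n)) % n
assert decrypt(c_forged) == 130
```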

3.3 Curve governance

- Some parameters are opaque; others are verifiably generated (“nothing up my sleeve”).

- For sovereignty: prefer transparent generation, broad scrutiny, and crypto-agility.

4. Key Exchange: Birth of Shared Secret on Hostile Wires

4.1 Diffie–Hellman

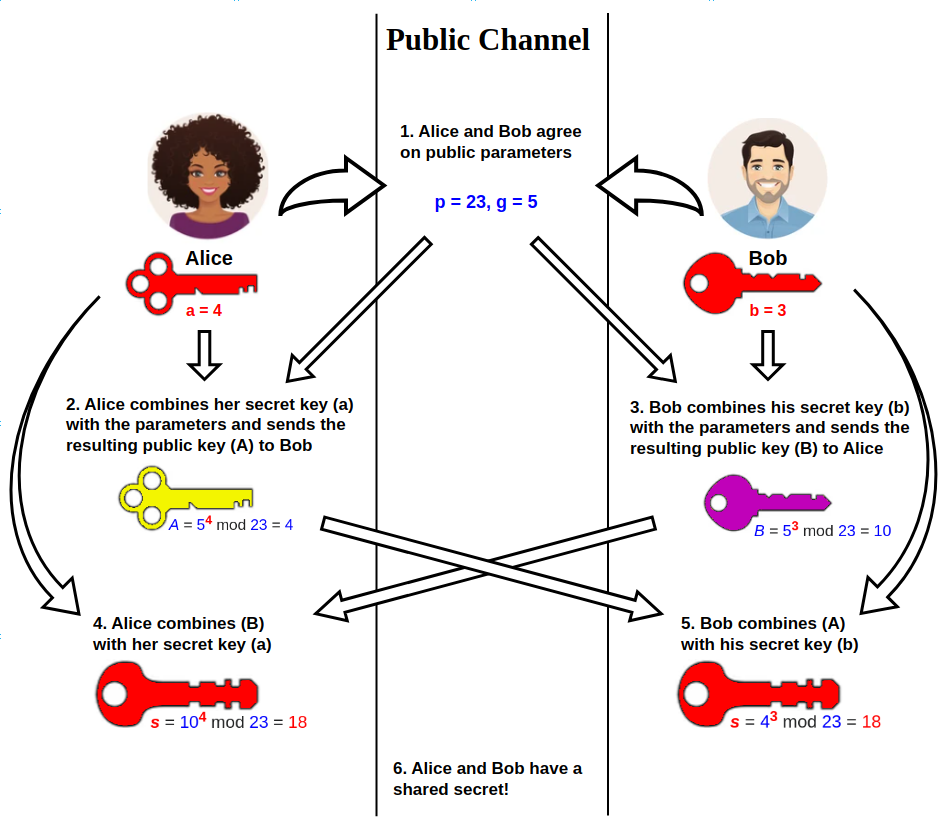

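Public parameters: a prime $p$ and a generator $g$. Alice picks a secret $a$ and sends $A = g^a \bmod p$; Bob picks a secret $b$ and sends $B = g^b \bmod p$. Alice computes $B^a \bmod p$, Bob computes $A^b \bmod p$, and both obtain $K = g^{ab} \bmod p$.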
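The exchange in a few lines of Python. The parameters are an illustrative assumption only: p = 2^521 - 1 is a known (Mersenne) prime, but p - 1 has many small factors, so this is a weak group and not a vetted DH group; real systems use standardized groups (e.g., the RFC 7919 FFDHE groups) or X25519. The point is that both sides reach the same K while only A and B cross the wire.

```python
import secrets

P = 2**521 - 1   # a known prime, but NOT a vetted DH group (toy assumption)
G = 3            # toy generator choice


def keypair() -> tuple:
    secret = secrets.randbelow(P - 3) + 2   # private exponent in [2, P-2]
    return secret, pow(G, secret, P)        # (private, public)


a, A = keypair()   # Alice keeps a, sends A = g^a mod p
b, B = keypair()   # Bob keeps b, sends B = g^b mod p

k_alice = pow(B, a, P)   # (g^b)^a
k_bob = pow(A, b, P)     # (g^a)^b
assert k_alice == k_bob  # both equal g^(ab) mod p
```

In practice the shared value is passed through a KDF before use rather than used directly as a key.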

4.2 Authentication and MITM

- Raw DH defeats passive attackers only.

- Active attacker can MITM and establish separate keys with each endpoint.

- Fix: bind exchange to authentication (sign DH values, PSK, certificates, etc.).
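The MITM and one of the fixes, sketched with a toy group (p = 2^521 - 1, g = 3; an illustrative assumption, not a vetted DH group). Mallory first substitutes her own value and ends up sharing one key with each victim; then each public value is bound to a pre-shared key with HMAC, so the substitution is rejected. This is deliberately simplified: real protocols such as TLS 1.3 or Noise authenticate the whole transcript and bind identities and freshness, and `bind`/`bob_accepts` are hypothetical helpers.

```python
import hashlib
import hmac
import secrets

P = 2**521 - 1                       # toy prime; NOT a vetted DH group
G = 3
WIDTH = (P.bit_length() + 7) // 8    # 66 bytes per encoded group element


def keypair():
    sk = secrets.randbelow(P - 3) + 2
    return sk, pow(G, sk, P)


def bind(psk: bytes, sender: bytes, pub: int) -> bytes:
    """MAC a DH public value under the pre-shared key (hypothetical helper)."""
    return hmac.new(psk, sender + b"|" + pub.to_bytes(WIDTH, "big"), hashlib.sha256).digest()


# Unauthenticated: Mallory replaces both A and B with her own value M.
a, A = keypair()
b, B = keypair()
m, M = keypair()
alice_key = pow(M, a, P)   # Alice believes M came from Bob
bob_key = pow(M, b, P)     # Bob believes M came from Alice
assert alice_key != bob_key
assert alice_key == pow(A, m, P) and bob_key == pow(B, m, P)   # Mallory holds both keys

# PSK-bound: Bob only accepts a public value carrying a valid tag.
psk = secrets.token_bytes(32)   # shared by Alice and Bob, unknown to Mallory
msg_from_alice = (A, bind(psk, b"alice", A))
forged = (M, bind(secrets.token_bytes(32), b"alice", M))   # Mallory can only guess the PSK


def bob_accepts(pub: int, tag: bytes) -> bool:
    return hmac.compare_digest(tag, bind(psk, b"alice", pub))


assert bob_accepts(*msg_from_alice)
assert not bob_accepts(*forged)
```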

4.3 Forward secrecy

- Ephemeral DH: fresh keys per session (or message).

- Without FS: “harvest now, decrypt later” becomes default.

4.4 Merkle’s computational asymmetry

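- Merkle puzzles: the legitimate parties do work proportional to N while an eavesdropper needs work proportional to N²; an early computational asymmetry and an ancestor of later public-key intuitions.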

- Modern echoes: hash-based and memory-hard constructions.
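A toy rendering of Merkle puzzles under stated assumptions: each puzzle hides (id, session key) under a deliberately weak 12-bit key, SHA-256 of that key serves as the pad, and 8 zero bytes let a solver recognize success; the puzzle count and difficulty are illustrative. Bob publishes N puzzles; Alice brute-forces just one and announces its id in the clear. An eavesdropper does not know which puzzle that id came from and must solve about N/2 of them; scaling per-puzzle work with N gives the N versus N² gap.

```python
import hashlib
import secrets

BITS = 12            # per-puzzle weak-key size (toy assumption)
MARKER = bytes(8)    # redundancy that lets a solver recognize the right key


def _pad(weak_key: int) -> bytes:
    return hashlib.sha256(weak_key.to_bytes(4, "big")).digest()   # 32-byte pad


def _xor(x: bytes, y: bytes) -> bytes:
    return bytes(i ^ j for i, j in zip(x, y))


def make_puzzles(n: int):
    """Bob: n puzzles, each hiding (id, session key) under a random BITS-bit key."""
    table, puzzles = {}, []
    for _ in range(n):
        pid, session = secrets.token_bytes(8), secrets.token_bytes(16)
        table[pid] = session
        weak = secrets.randbelow(2**BITS)
        puzzles.append(_xor(_pad(weak), MARKER + pid + session))
    return table, puzzles


def solve(puzzle: bytes):
    """Brute-force one puzzle: at most 2**BITS hash evaluations."""
    for guess in range(2**BITS):
        plain = _xor(_pad(guess), puzzle)
        if plain[:8] == MARKER:
            return plain[8:16], plain[16:]   # (id, session key)
    raise ValueError("unsolvable puzzle")


bob_table, public_puzzles = make_puzzles(64)
pid, alice_key = solve(secrets.choice(public_puzzles))   # Alice solves one at random
bob_key = bob_table[pid]                                  # Alice sends pid in the clear
assert alice_key == bob_key
# Eve sees all puzzles and pid, but must solve ~N/2 puzzles (each ~2**BITS work) to match it.
```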

5. Hash Functions & Merkle Trees: Commitment, Memory, Time

5.1 Properties of cryptographic hashes

- Preimage, second-preimage, collision resistance.

- Uses: commitments, fingerprints, MAC/KDF/PRF building blocks.

- MD5/SHA-1: collision-broken → migrate (SHA-2/SHA-3/BLAKE2/…); keep agility.
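A hash commitment, the simplest of the uses above, sketched with SHA-256 under the assumption that the opening value is 32 fresh random bytes. The randomness makes it hiding (an observer cannot brute-force a low-entropy value such as a bid), and collision resistance makes it binding (the committer cannot later open it to a different value). The domain-separation prefix and function names are hypothetical.

```python
import hashlib
import hmac
import secrets

PREFIX = b"commit-v1|"   # hypothetical domain-separation label


def commit(value: bytes) -> tuple:
    """Return (commitment, opening). Publish the commitment; keep the opening secret."""
    opening = secrets.token_bytes(32)   # fixed length, so opening || value parses unambiguously
    return hashlib.sha256(PREFIX + opening + value).digest(), opening


def verify(commitment: bytes, value: bytes, opening: bytes) -> bool:
    expected = hashlib.sha256(PREFIX + opening + value).digest()
    return hmac.compare_digest(commitment, expected)


c, r = commit(b"my sealed bid: 42")
assert verify(c, b"my sealed bid: 42", r)
assert not verify(c, b"my sealed bid: 43", r)
```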

5.2 Random oracle vs reality

- Proofs often assume an ideal random oracle.

- Real hashes approximate the ideal; they are structured algorithms.

- Therefore: algorithm choice cannot be frozen forever.

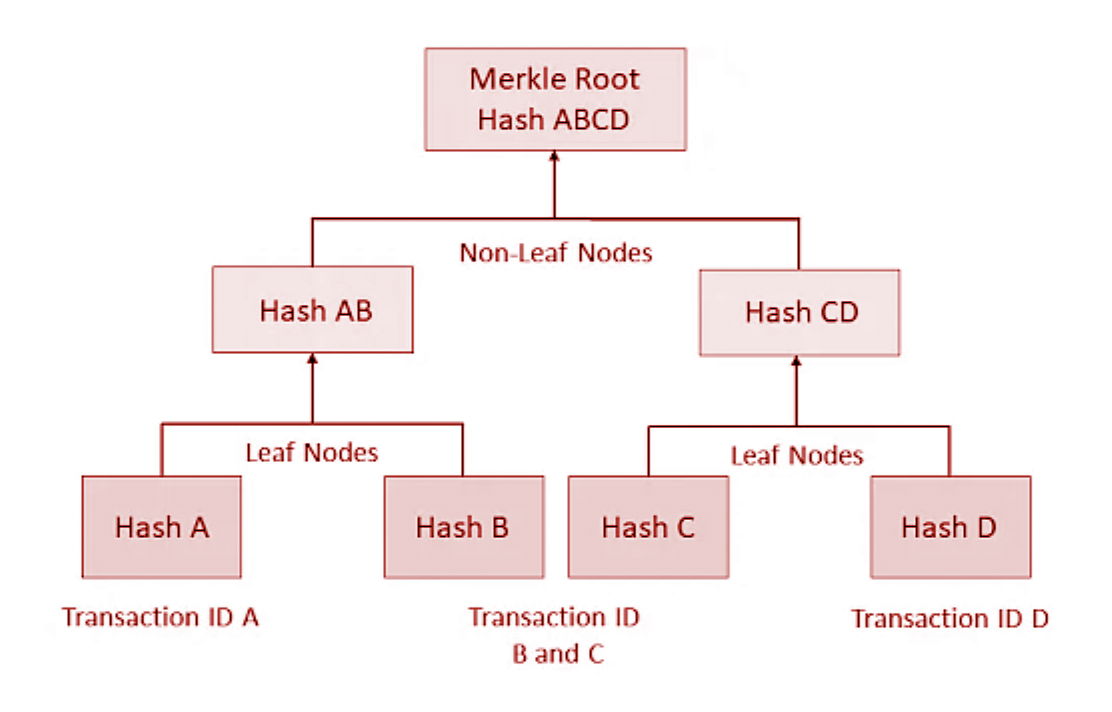

5.3 Merkle trees and time-chained state

- Root hash commits to an entire dataset; membership proofs are O(log n).

- Blockchains: each block commits to its contents via a Merkle root and to its predecessor via the previous block's hash.

- Hash + Merkle + economic cost = expensive-to-rewrite memory.
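A compact Merkle tree with O(log n) membership proofs. Assumptions: SHA-256, RFC 6962-style domain separation (a 0x00 prefix for leaves and 0x01 for interior nodes, so a leaf can never be passed off as a node), and an unpaired node promoted unchanged to the next level, which is one of several conventions in use. A proof is just the sibling hashes on the path to the root.

```python
import hashlib


def leaf_hash(data: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + data).digest()           # leaf domain


def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()   # interior-node domain


def build_levels(leaves: list) -> list:
    """All tree levels, leaves first; an unpaired node is promoted unchanged."""
    level = [leaf_hash(x) for x in leaves]
    levels = [level]
    while len(level) > 1:
        level = [node_hash(level[i], level[i + 1]) if i + 1 < len(level) else level[i]
                 for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def prove(levels: list, index: int) -> list:
    """Sibling hashes from leaf to root, each tagged with which side it sits on."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        if sibling < len(level):
            proof.append((level[sibling], sibling < index))   # (hash, sibling_is_left)
        index //= 2
    return proof


def verify(root: bytes, data: bytes, proof: list) -> bool:
    h = leaf_hash(data)
    for sibling, sibling_is_left in proof:
        h = node_hash(sibling, h) if sibling_is_left else node_hash(h, sibling)
    return h == root


txs = [b"tx0", b"tx1", b"tx2", b"tx3", b"tx4"]
levels = build_levels(txs)
root = levels[-1][0]
assert all(verify(root, tx, prove(levels, i)) for i, tx in enumerate(txs))
assert not verify(root, b"tx9", prove(levels, 2))
```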

6. Digital Signatures & Key Lifecycle: Authority at the Edge

6.1 Signature schemes

- KeyGen → (SK, PK); Sign(SK, M) → σ; Verify(PK, M, σ) → accept/reject.

- Schemes: RSA-PSS, ECDSA, Ed25519, Schnorr.

6.2 ECDSA nonces: microscopic detail, catastrophic failure

- Nonce reuse/bias/predictability can leak the private key from signatures.

- Mitigation: deterministic nonces (RFC6979-style) reduce RNG dependence.
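To see why a repeated ECDSA nonce is fatal, here is a from-scratch sketch over secp256k1 (curve constants as published in SEC 2) in pure Python. It signs two different messages with the same nonce k, then recovers the private key from public data alone via k = (z1 - z2) / (s1 - s2) mod n and d = (s1·k - z1) / r mod n. The arithmetic is variable-time and skips real-world encoding details (DER, low-s normalization); use it to understand the failure, never to sign.

```python
import hashlib
import secrets

# secp256k1 (SEC 2): y^2 = x^3 + 7 over F_P; the base point G has prime order N.
P = 2**256 - 2**32 - 977
N = 0xFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFFEBAAEDCE6AF48A03BBFD25E8CD0364141
G = (0x79BE667EF9DCBBAC55A06295CE870B07029BFCDB2DCE28D959F2815B16F81798,
     0x483ADA7726A3C4655DA4FBFC0E1108A8FD17B448A68554199C47D08FFB10D4B8)


def add(a, b):
    """Affine point addition; None is the point at infinity."""
    if a is None:
        return b
    if b is None:
        return a
    (x1, y1), (x2, y2) = a, b
    if x1 == x2 and (y1 + y2) % P == 0:
        return None
    if a == b:
        lam = 3 * x1 * x1 * pow(2 * y1, -1, P) % P               # tangent (curve a = 0)
    else:
        lam = (y2 - y1) * pow((x2 - x1) % P, -1, P) % P          # chord
    x3 = (lam * lam - x1 - x2) % P
    return x3, (lam * (x1 - x3) - y1) % P


def mul(k, pt):
    """Double-and-add scalar multiplication (variable time: demo only)."""
    acc = None
    while k:
        if k & 1:
            acc = add(acc, pt)
        pt = add(pt, pt)
        k >>= 1
    return acc


def z_of(msg: bytes) -> int:
    return int.from_bytes(hashlib.sha256(msg).digest(), "big") % N


def sign(d: int, msg: bytes, k: int):
    r = mul(k, G)[0] % N
    s = pow(k, -1, N) * (z_of(msg) + r * d) % N
    return r, s


def verify(Q, msg: bytes, sig) -> bool:
    r, s = sig
    w = pow(s, -1, N)
    pt = add(mul(z_of(msg) * w % N, G), mul(r * w % N, Q))
    return pt is not None and pt[0] % N == r


def recover_private_key(m1: bytes, sig1, m2: bytes, sig2) -> int:
    (r, s1), (r2, s2) = sig1, sig2
    assert r == r2, "a repeated r is the public fingerprint of a reused nonce"
    z1, z2 = z_of(m1), z_of(m2)
    k = (z1 - z2) * pow((s1 - s2) % N, -1, N) % N   # s1 - s2 = k^-1 (z1 - z2)
    return (s1 * k - z1) * pow(r, -1, N) % N         # s1 * k = z1 + r * d


d = secrets.randbelow(N - 1) + 1
Q = mul(d, G)
k = secrets.randbelow(N - 1) + 1   # the bug being demonstrated: k is used twice
sig1 = sign(d, b"pay alice 1 coin", k)
sig2 = sign(d, b"pay bob 2 coins", k)
assert verify(Q, b"pay alice 1 coin", sig1) and verify(Q, b"pay bob 2 coins", sig2)
assert recover_private_key(b"pay alice 1 coin", sig1, b"pay bob 2 coins", sig2) == d
```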

6.3 Crypto vs legal “non-repudiation”

- Crypto claim: valid signature implies “someone with SK signed M.”

- Reality: shared keys, server signing, coercion break “intent.”

- Sovereign stance: treat signatures as capability tokens; use multi-sig/threshold/auditing for accountability.

6.4 Key hierarchies and thresholds

- Split roles: spending vs viewing vs governance keys.

- Threshold / multi-sig: M-of-N authorization limits the impact of any single key compromise.

- Lifecycle planning: generation, use isolation, backup, rotation, revocation, inheritance.
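Shamir secret sharing, a standard building block behind M-of-N key custody, sketched over the prime field modulo 2^127 - 1 (the field size is an illustrative assumption; secrets must be smaller than it). Note the difference from true threshold signing: here the key is reconstructed in one place, which reintroduces a single point of compromise at use time; threshold-signature protocols avoid ever assembling it.

```python
import secrets

PRIME = 2**127 - 1   # field modulus (a Mersenne prime)


def split(secret: int, m: int, n: int) -> list:
    """M-of-N: random degree-(m-1) polynomial with f(0) = secret; share i is (i, f(i))."""
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(m - 1)]
    shares = []
    for x in range(1, n + 1):
        y = 0
        for c in reversed(coeffs):   # Horner evaluation mod PRIME
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def recover(shares: list) -> int:
    """Lagrange interpolation at x = 0 from any m distinct shares."""
    total = 0
    for j, (xj, yj) in enumerate(shares):
        num, den = 1, 1
        for k, (xk, _) in enumerate(shares):
            if k != j:
                num = num * xk % PRIME
                den = den * (xk - xj) % PRIME
        total = (total + yj * num * pow(den, -1, PRIME)) % PRIME
    return total


key = secrets.randbelow(PRIME)
shares = split(key, m=3, n=5)
assert recover(shares[:3]) == key
assert recover([shares[4], shares[1], shares[3]]) == key
```

With fewer than m shares, every candidate secret remains equally likely, so losing up to m - 1 custodians' shares leaks nothing.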

7. Zero-Knowledge Proofs: Verifiable Truth, Hidden Reason

7.1 Definition

- Completeness, soundness, zero-knowledge.

- Goldwasser–Micali–Rackoff: “knowledge” becomes an object of proof.
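Schnorr's identification protocol is a compact instance of all three properties for the statement "I know x such that y = g^x". The sketch assumes a deliberately tiny group (p = 2039 = 2·1019 + 1, q = 1019, and g = 4 generating the order-q subgroup) so the arithmetic stays readable; real systems use roughly 256-bit prime-order groups, usually elliptic curves. The simulator demonstrates honest-verifier zero-knowledge, which is weaker than zero-knowledge against arbitrary verifiers. Requires Python 3.8+ for modular inverses via `pow`.

```python
import secrets

# Deliberately tiny group: P = 2Q + 1 with P, Q prime; G = 4 generates the order-Q subgroup.
P, Q, G = 2039, 1019, 4


def keygen():
    x = secrets.randbelow(Q - 1) + 1   # prover's secret witness
    return x, pow(G, x, P)             # (x, public statement y = g^x)


def accept(y: int, t: int, c: int, s: int) -> bool:
    """Verifier's check: g^s == t * y^c (mod P)."""
    return pow(G, s, P) == t * pow(y, c, P) % P


x, y = keygen()

# Completeness: an honest prover always convinces the verifier.
r = secrets.randbelow(Q)
t = pow(G, r, P)            # 1. prover's commitment
c = secrets.randbelow(Q)    # 2. verifier's random challenge
s = (r + c * x) % Q         # 3. prover's response
assert accept(y, t, c, s)

# Soundness (special soundness): two accepting answers to one commitment reveal x.
c1, c2 = 5, 9
s1, s2 = (r + c1 * x) % Q, (r + c2 * x) % Q
extracted = (s1 - s2) * pow((c1 - c2) % Q, -1, Q) % Q
assert extracted == x

# Zero-knowledge (honest verifier): choose c and s first, solve for t; no x needed.
c_sim, s_sim = secrets.randbelow(Q), secrets.randbelow(Q)
t_sim = pow(G, s_sim, P) * pow(y, -c_sim, P) % P
assert accept(y, t_sim, c_sim, s_sim)
```

The simulated transcripts have the same distribution as real ones, which is exactly why a transcript proves nothing to a third party.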

7.2 SNARKs and STARKs

- SNARKs: succinct proofs, fast verify; often need a trusted setup.

- STARKs: transparent (no trusted setup), hash-based; larger proofs.

- Other families: Bulletproofs, Halo, … trade-offs matter.

7.3 Uses

- Private transactions, attribute proofs, verifiable computation.

7.4 Double-edged: privacy vs opaque governance

ZK can either preserve individual privacy under inspectable rules, or become a mathematical veil for unaccountable policy. Circuits/constraints must be transparent and forkable; contestability must exist.

8. Secure Computation: Joint Functions Without Data Surrender

8.1 Problem

Parties hold private inputs x₁…xₙ and want y = f(x₁…xₙ): everyone learns y and nothing beyond what y itself reveals.

8.2 Yao’s garbled circuits (2PC)

- Express f as a Boolean circuit, garble wires with symmetric keys.

- Use oblivious transfer to deliver only input-consistent keys.

- Evaluation yields output without revealing other inputs.
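A single garbled AND gate shows the core trick. Assumptions for this sketch: 16-byte random wire labels, SHA-256 of the two input labels as the one-time pad for each table row, and 16 zero bytes of redundancy so the evaluator can recognize the one row it can decrypt. Oblivious transfer is not implemented; the loop simply hands the evaluator the right label. Practical schemes (point-and-permute, free-XOR, half-gates) are much more efficient than this trial-decryption version.

```python
import hashlib
import secrets

LABEL_LEN = 16
ZERO_PAD = bytes(LABEL_LEN)   # redundancy marking the one correctly decrypted row


def _h(ka: bytes, kb: bytes) -> bytes:
    return hashlib.sha256(ka + kb).digest()   # 32-byte pad derived from two input labels


def _xor(x: bytes, y: bytes) -> bytes:
    return bytes(i ^ j for i, j in zip(x, y))


def garble_and():
    """Garbler: two random labels per wire (list index = the bit it encodes), shuffled table."""
    wa, wb, wc = ([secrets.token_bytes(LABEL_LEN) for _ in range(2)] for _ in range(3))
    table = [_xor(_h(wa[a], wb[b]), wc[a & b] + ZERO_PAD) for a in (0, 1) for b in (0, 1)]
    secrets.SystemRandom().shuffle(table)   # row order must not reveal the inputs
    return wa, wb, wc, table


def evaluate(table: list, ka: bytes, kb: bytes) -> bytes:
    """Evaluator: holds one opaque label per input wire; exactly one row decrypts cleanly."""
    for row in table:
        plain = _xor(_h(ka, kb), row)
        if plain[LABEL_LEN:] == ZERO_PAD:
            return plain[:LABEL_LEN]
    raise ValueError("no row decrypted")


wa, wb, wc, table = garble_and()
decode = {wc[0]: 0, wc[1]: 1}   # output-wire decoding map, revealed by the garbler
for a in (0, 1):
    for b in (0, 1):
        # In the real protocol the evaluator obtains wb[b] via oblivious transfer.
        assert decode[evaluate(table, wa[a], wb[b])] == (a & b)
```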

8.3 Secret-sharing MPC

- Split secrets into shares across parties; operate on shares.

- Security depends on adversary model (semi-honest vs malicious) and collusion thresholds.
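Additive secret sharing in miniature: each input is split into n random-looking shares that sum to it modulo 2^64, parties add the shares they hold locally, and only the combined total is revealed. The scenario and numbers are hypothetical. This is the semi-honest setting where all n parties are needed to reconstruct, so any n - 1 colluders learn nothing about an honest party's input beyond what the total implies; additions are free, while multiplications need extra machinery such as Beaver triples.

```python
import secrets

MOD = 2**64   # share arithmetic modulo 2^64 (inputs and their sum must fit)


def share(value: int, n_parties: int) -> list:
    """Additive sharing: n-1 uniform random shares plus one correcting share."""
    shares = [secrets.randbelow(MOD) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % MOD)
    return shares


# Three hospitals want a total patient count without revealing their own counts.
inputs = {"h1": 1200, "h2": 875, "h3": 430}
n = len(inputs)

# Each party splits its input and sends share j to party j (keeping its own).
dealt = [share(v, n) for v in inputs.values()]

# Party j sums the shares it received; each one alone is uniformly random.
partials = [sum(row[j] for row in dealt) % MOD for j in range(n)]

# Publishing the partial sums reveals only the total.
total = sum(partials) % MOD
assert total == sum(inputs.values())   # 2505
```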

8.4 Homomorphic encryption (HE)

- Compute on ciphertexts (partially or fully homomorphic).

- Enables analytics/ML without raw data release (but see leakage).
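Partially homomorphic encryption in miniature: Paillier's scheme is additively homomorphic, so multiplying ciphertexts adds the hidden plaintexts. Loud assumption: the primes are the Mersenne primes 2^127 - 1 and 2^89 - 1, chosen only because they are well-known primes; real keys use large random primes, and this toy offers no real security. Fully homomorphic schemes (lattice-based) support arbitrary circuits at much higher cost.

```python
import math
import secrets

# Toy key from two known Mersenne primes; real Paillier keys use large random primes.
p, q = 2**127 - 1, 2**89 - 1
n = p * q
n2 = n * n
lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)   # lcm(p-1, q-1)
mu = pow(lam, -1, n)                                 # valid because the generator is n + 1


def encrypt(m: int) -> int:
    """E(m) = (1 + n)^m * r^n mod n^2 with fresh random r, so encryption is randomized."""
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (1 + m * n) * pow(r, n, n2) % n2   # (1 + n)^m == 1 + m*n (mod n^2)


def decrypt(c: int) -> int:
    u = pow(c, lam, n2)
    return (u - 1) // n * mu % n


# A server multiplies ciphertexts without any key; the plaintexts add underneath.
c_sum = encrypt(20) * encrypt(22) % n2
assert decrypt(c_sum) == 42
# Raising a ciphertext to a public constant multiplies the plaintext.
assert decrypt(pow(encrypt(7), 3, n2)) == 21
```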

8.5 Leakage through outputs + metadata

MPC/HE protect inputs syntactically, but repeated queries, chosen functions, timing/abort patterns, output distributions, and metadata can reconstruct sensitive information. Output-leakage modeling is part of the design.
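A differencing attack shows output leakage with no cryptographic break at all: two aggregate queries, each revealing only a sum, subtract to one person's exact value. The data and the `private_sum` stand-in are hypothetical; whether the sum is computed under MPC or HE does not change the result, because the leak is in the outputs. Common countermeasures include minimum query-set sizes, query auditing, and differential-privacy noise.

```python
# Hypothetical inputs; only the outputs of the secure computation matter here.
salaries = {"ana": 71_000, "ben": 64_000, "chen": 88_000, "dia": 59_000}


def private_sum(names) -> int:
    """Stand-in for a secure computation that reveals only the sum of selected inputs."""
    return sum(salaries[name] for name in names)


everyone = private_sum(salaries)
all_but_chen = private_sum(name for name in salaries if name != "chen")
print(everyone - all_but_chen)   # 88000: chen's salary, reconstructed from two "safe" outputs
```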

9. Governance: PKI, Standards, Hardware, Toolchains

9.1 PKI and certificate authorities

- Classic web trust: OS/browser roots; X.509; CA mis-issuance enables impersonation.

- Alternatives/augmentations: TOFU (SSH), web-of-trust, Certificate Transparency (CT) and key transparency logs.

- Sovereign direction: reduce reliance on single global root sets; make key history visible and contestable.
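A minimal trust-on-first-use pin store, loosely modeled on SSH `known_hosts` behavior and the unpadded `SHA256:` base64 fingerprints OpenSSH displays. The class and method names are hypothetical, and the key bytes in the usage are placeholders for an encoded host public key. It makes the TOFU trade-off visible: first contact is an unauthenticated leap of faith, while every later key change becomes a loud, contestable event instead of a silent substitution.

```python
import base64
import hashlib


def fingerprint(public_key: bytes) -> str:
    """SHA-256 fingerprint as unpadded base64 with a 'SHA256:' prefix."""
    digest = hashlib.sha256(public_key).digest()
    return "SHA256:" + base64.b64encode(digest).decode().rstrip("=")


class PinStore:
    """Trust on first use: pin the first key seen per host, then alarm on any change."""

    def __init__(self):
        self.pins = {}

    def check(self, host: str, public_key: bytes) -> str:
        fp = fingerprint(public_key)
        pinned = self.pins.get(host)
        if pinned is None:
            self.pins[host] = fp   # first contact: accepted without authentication
            return "pinned"
        if pinned == fp:
            return "match"
        raise ValueError(f"key for {host} changed: {pinned} -> {fp}")


store = PinStore()
assert store.check("git.example.org", b"placeholder-key-A") == "pinned"
assert store.check("git.example.org", b"placeholder-key-A") == "match"
```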

9.2 Standards and RNGs

- “Approved” ≠ trustworthy against all adversaries.

- RNG is a first-class primitive; treat it as an attack surface.

- Crypto-agility: keep the ability to swap primitives without central permission.
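Why the RNG is an attack surface, in a few lines: a 128-bit token drawn from a non-cryptographic generator seeded with the clock has only as much entropy as the attacker's uncertainty about the seed. The sketch uses Python's `random.Random` (a Mersenne Twister, which Python's documentation says is not for security purposes) and assumes the attacker knows the issue time to within ten minutes; `secrets` draws from the OS CSPRNG instead. The helper name is hypothetical.

```python
import random
import secrets
import time


def weak_token(seed: int) -> int:
    """ANTI-PATTERN: Mersenne Twister seeded with the clock."""
    return random.Random(seed).getrandbits(128)


issued_at = int(time.time())
token = weak_token(issued_at)   # looks like 128 random bits...

# ...but an attacker who knows the issue time to within ten minutes tries ~1200 seeds.
recovered = next(t for t in range(issued_at - 600, issued_at + 601) if weak_token(t) == token)
assert recovered == issued_at

strong_token = secrets.token_bytes(16)   # OS CSPRNG: no guessable seed to search
```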

9.3 Hardware, TEEs, and supply chain

- TEEs/HSMs/TPMs can isolate keys but often rely on vendor firmware + attestation services.

- Risks: vendor revocation, remote update coercion, public vulnerabilities, pre-shipment implants.

- Sovereign conclusion: TEEs are replaceable optimization layers, not ultimate roots of trust.

10. Post-Quantum Cryptography: Algorithm Lifetimes

- Shor's algorithm: on a large fault-tolerant quantum computer, breaks RSA/ECC (factoring/discrete log).

- Grover's algorithm: quadratic speedup against symmetric keys/hashes → increase key sizes.
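A back-of-the-envelope version of the Grover bullet, ignoring constant factors and the fact that Grover iterations parallelize poorly:

$$
\begin{aligned}
\text{classical search over } 2^{k} \text{ keys} &\approx 2^{k} \text{ trials} \\
\text{Grover search over } 2^{k} \text{ keys} &\approx \sqrt{2^{k}} = 2^{k/2} \text{ iterations} \\
\text{AES-128: } 2^{128} \to 2^{64}, &\qquad \text{AES-256: } 2^{256} \to 2^{128}
\end{aligned}
$$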

Mitigations

- Symmetric: larger keys/digests (e.g., AES-256; 256-bit hashes).

- Asymmetric: migrate toward PQ schemes (lattice/code/hash-based, etc.).

- Hybrid schemes during migration (classical + PQ).
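The hash-based family is the easiest to see from first principles. This sketch implements a Lamport one-time signature (chosen for illustration; it is not one of the standardized PQ schemes): its security rests only on SHA-256 preimage resistance, so Shor's algorithm gives no shortcut. Each key pair may sign exactly one message, since every signature reveals half of the secret key; standardized hash-based schemes such as XMSS and SPHINCS+ build many-time signatures from similar one-time components.

```python
import hashlib
import secrets


def keygen():
    """256 pairs of random secrets; the public key is their hashes."""
    sk = [[secrets.token_bytes(32), secrets.token_bytes(32)] for _ in range(256)]
    pk = [[hashlib.sha256(s).digest() for s in pair] for pair in sk]
    return sk, pk


def _bits(msg: bytes) -> list:
    digest = int.from_bytes(hashlib.sha256(msg).digest(), "big")
    return [(digest >> (255 - i)) & 1 for i in range(256)]   # most significant bit first


def sign(sk, msg: bytes) -> list:
    """Reveal one secret per digest bit. A key must NEVER sign a second message."""
    return [sk[i][bit] for i, bit in enumerate(_bits(msg))]


def verify(pk, msg: bytes, sig: list) -> bool:
    return len(sig) == 256 and all(
        hashlib.sha256(s).digest() == pk[i][bit]
        for i, (s, bit) in enumerate(zip(sig, _bits(msg)))
    )


sk, pk = keygen()
sig = sign(sk, b"upgrade path v2")
assert verify(pk, b"upgrade path v2", sig)
assert not verify(pk, b"upgrade path v3", sig)
```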

Cryptography is perishable infrastructure with an explicit half-life. Plan upgrade paths that do not depend on a single central authority mandating changes.

11. Composability: Secure Primitives ≠ Secure Systems

Components can be provably secure under stated assumptions and still fail when assembled. In real stacks: multiple protocols, nested channels, side effects, side channels, and human interfaces.

- Each new layer must inherit and respect the assumptions beneath it.

- Mismatches (key reuse across contexts, colliding trust models, degraded randomness) are attack surfaces.
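A common fix for key reuse across contexts is domain separation: derive one subkey per purpose from a master secret, each under a distinct label. Python's standard library has no HKDF, so the sketch builds HKDF-SHA256 from `hmac` following the extract-then-expand structure of RFC 5869; the labels are hypothetical, and a vetted library implementation is preferable in practice.

```python
import hashlib
import hmac
import secrets

HASH_LEN = 32   # SHA-256 output size


def hkdf(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF-SHA256: extract a pseudorandom key, then expand it under the context label `info`."""
    if length > 255 * HASH_LEN:
        raise ValueError("HKDF output too long")
    prk = hmac.new(salt or bytes(HASH_LEN), ikm, hashlib.sha256).digest()   # extract
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                                                # expand
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


master = secrets.token_bytes(32)   # e.g., the output of an authenticated key exchange
enc_key = hkdf(master, b"", b"example-app v1 | encryption")       # hypothetical labels
mac_key = hkdf(master, b"", b"example-app v1 | authentication")
sign_seed = hkdf(master, b"", b"example-app v1 | signing")
assert len({enc_key, mac_key, sign_seed}) == 3   # one independent-looking key per context
```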

12. The Thinkers as Law-Givers

- Diffie & Hellman: key exchange over hostile networks.

- Merkle: authenticated structures → global logs, blockchains, time-anchored commitments.

- RSA: scalable public-key crypto + signatures.

- Goldwasser & Micali: semantic security + zero-knowledge foundations.

- Yao: secrecy generalized from messages to functions (secure computation).

- Boneh: modern applied crypto made operational (threshold schemes, pairings, real protocols).

- Schneier: security is socio-technical; incentives and systems are usually the weakness.

- Anderson: security engineering as a discipline; real failure modes catalogued.

13. Integrated Stack: From Primitives to Sovereign Infrastructure

- Assumptions & threats: passive/active adversaries, endpoint compromise, legal coercion, AI inference.

- Primitives: AEAD, strong randomness, careful nonces/KDFs; public-key with transparent parameters; hashes/Merkle; signatures with nonce discipline.

- Protocols: authenticated ephemeral key exchange + forward secrecy; authorization via signatures + thresholds; ZK with auditable circuits; MPC/HE with explicit output leakage models.

- Governance & toolchain: auditable/forkable key infrastructure; contestable standards; supply chains verifiable where possible; minimal TCB.

- Civilizational semantics: keys as property; ledgers as expensive history; protocols that resist centralization and remain forkable under hostile governance.

The primitives are neutral. The composition, governance, and threat model are not.

Resources (linked)

Each item below is linked and referenced inline where it fits best.

[R1] Katz & Lindell — Introduction to Modern Cryptography

Core definitional spine: IND-CPA/IND-CCA, MACs, signatures, key exchange, reductions.

[R2] Goldwasser & Bellare — Lecture Notes on Cryptography (PDF)

Provable security and reductions; compressed theorem-book complement to [R1].

[R3] Boneh & Shoup — A Graduate Course in Applied Cryptography (PDF)

Modern applied bridge: AEAD, key exchange, signatures, protocol realities (v0.6, 2023).

[R4] Ferguson, Schneier, Kohno — Cryptography Engineering

How to not wreck primitives: RNGs, key mgmt, protocol design, implementation pitfalls.

[R5] Ross Anderson — Security Engineering

Socio-technical security: hardware, payment systems, incentives, real failure modes.

[R6] Dan Boneh — Online Cryptography Course

Structured lecture path through symmetric, public-key, hashes/MACs/signatures, protocols.

[R8] Berkeley RDI — Zero Knowledge Proofs (MOOC, Spring 2023)

Modern ZK backbone: classical ZK → SNARKs/STARKs → programming + applications.

[R9] Diffie & Hellman (1976) — “New Directions in Cryptography” (PDF)

Origin of public-key cryptography and Diffie–Hellman key exchange.

[R10] Rivest, Shamir, Adleman (1978) — RSA paper (PDF)

Practical public-key cryptosystems + digital signatures.

[R11] Merkle — “Secure Communications Over Insecure Channels”

Merkle puzzles and early authenticated structures: conceptual ancestors of later systems.

[R12] Goldwasser & Micali — “Probabilistic Encryption”

Semantic security and probabilistic encryption foundations.

[R13] Goldwasser, Micali, Rackoff — ZK foundations

Formal zero-knowledge via simulation; definitional anchor.

[R14] Yao (1982) — “Protocols for Secure Computations” (PDF)

Seminal MPC/secure computation framing (includes “millionaires problem”).

[R14b] Yao (1986) — “How to Generate and Exchange Secrets” (PDF)

Extended abstract; foundational 2PC/garbled-circuits lineage.

[R15] Matthew Green — “Zero Knowledge Proofs: An Illustrated Primer”

Intuitive geometry before algebra: commitments, ZK flow, why it works.

[R17] Lindell (2021) — “Secure Multiparty Computation” (CACM)

Survey of MPC moving from theory to practice; threat models + modern protocols.

[R18] Cramer, Damgård, Nielsen — Secure Multiparty Computation and Secret Sharing

Comprehensive formal text on MPC and secret sharing (Cambridge Core).

[R19] Hazay & Lindell — Efficient Secure Two-Party Protocols

Efficient 2PC techniques and constructions (SpringerLink).

[R20] Adam Shostack — Threat Modeling: Designing for Security

Repeatable threat modeling discipline (assets, attackers, abuse cases, STRIDE).

[R22] Schneier — “The Psychology of Security” (PDF)

Security theater, cognitive bias, and why “feels safe” ≠ “is safe.”

[R23] Phillip Rogaway — “The Moral Character of Cryptographic Work” (PDF)

Cryptography as power redistribution; post-Snowden critique of field alignment.

[R24] Cryptography FM — Podcast

Research-grade interviews and deep dives into schemes, attacks, standards.

[R26] Zero Knowledge — Podcast

ZK, privacy protocols, decentralized infra; strong entry points into modern proving systems.

[R28] FRONTLINE — “Global Spyware Scandal: Exposing Pegasus”

Why endpoint compromise dominates naive “crypto = safe” thinking.

Optional media pairings for system-level threat realism: Citizenfour • The Internet’s Own Boy